The Writer’s Room

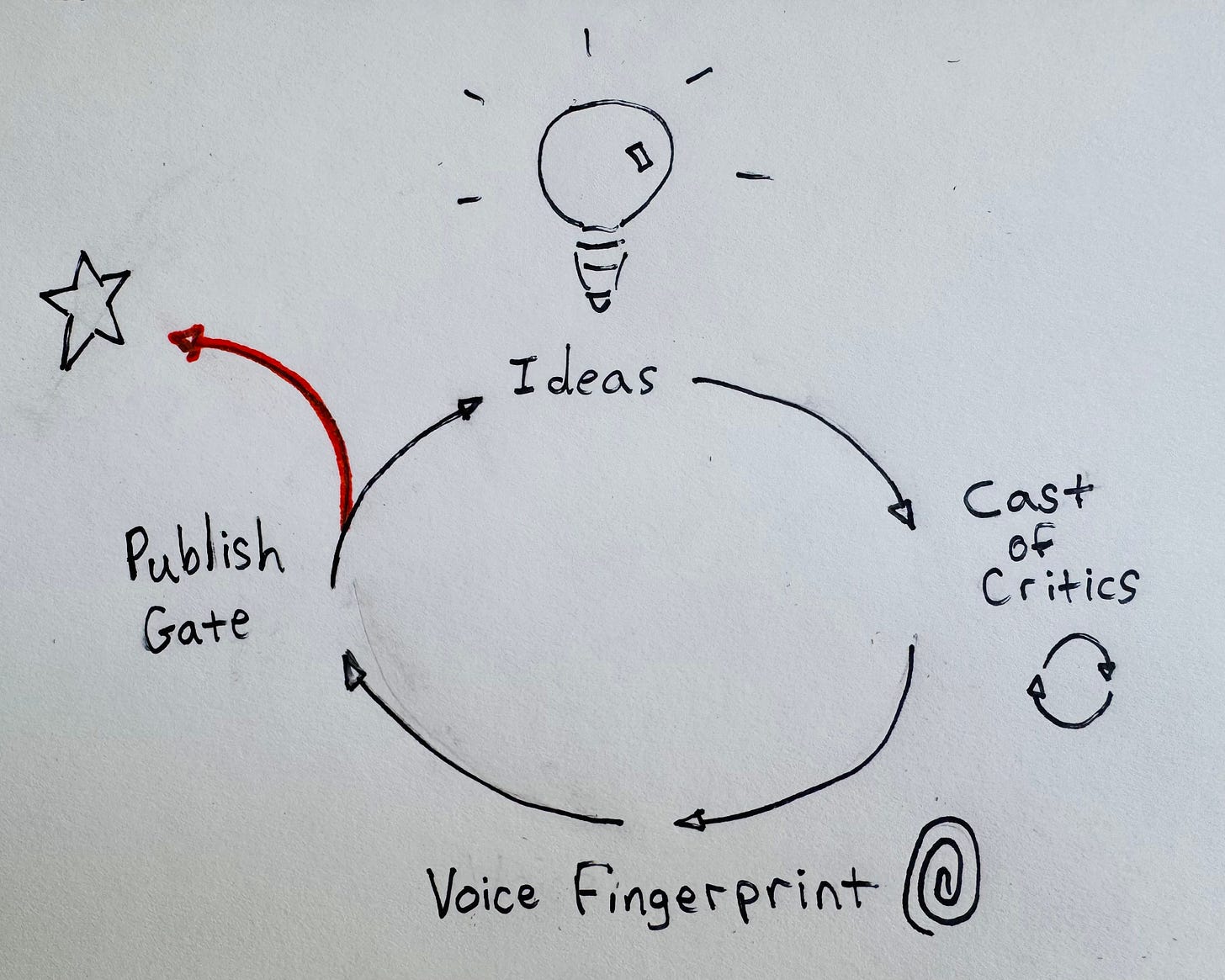

How I built an AI editorial system to amplify critique and pushback

I write with AI. When I write an article, the work is what I reject.

AI produces drafts that come back coherent but voiceless. Framings that are plausible but not quite right. Arguments assembled correctly that don’t say anything worth saying. What you do with those — whether you accept them or push back, redirect, override — is where the writing actually happens.

I’ve started thinking of myself less as a writer in this process and more as an editorial director.

The ideas are mine — the questions, the hypotheses, the things I’ve noticed that feel worth saying. Those come before AI is involved at all. What I’m directing is how those ideas get shaped, challenged, and expressed. That distinction matters to me, and I think it matters for how you read what follows.

This is my twelfth article on Substack in two months, every one produced in collaboration with Claude. I’ve built a set of custom tools — Claude Skills in a private GitHub repository — specifically designed to make that process consistent and rigorous. When people assume AI-assisted writing means the AI writes and the human prompts, my experience runs the other way. I interrogate the drafts, redirect them, override them. I wrote about this in a piece on gravitational pull and escape velocity — uncritical AI use pulls work toward competent and generic. The tools I’ve built are designed to push back against that.

And I almost never accept what Claude gives me.

Effective and Efficient Are Different Problems

The conventional use case for AI writing tools is efficiency: produce more, faster, with less effort. That wasn’t the problem I was trying to solve. I needed to produce work that was stronger — more defensible, more specific, a clearer expression of my actual thinking, more signal, less noise. Those are different problems. They require different solutions.

I’ve tried keeping a journal or a blog before. Several times, over many years. I’ve failed every time — not for lack of ideas, but for lack of a system that made consistency possible and made the output worth the effort. The question I kept running into wasn’t “do I have something to say?” It was “how do I say it in a way that holds together and that I’m proud of?”

My answer was to build tools around the process I was already following. I was already asking AI to read a paragraph as a CPO would, or to find the arguments that wouldn’t survive a hostile read. The insight was that I could turn that into a repeatable system: one I could run the same way every time instead of rebuilding it from scratch in every session, and one that got better with every article I published.

That’s what I built. It’s not an “easy button.” It’s a system for doing the hard work.

The Cast of Critics

The first thing I built was what I call the Review Board — a Cast of Critics. Named personas representing the people whose judgment matters most: a CPO evaluating whether the argument demonstrates real product thinking, an executive recruiter reading for professional positioning, an editorial executive stress-testing argument rigor, a communications strategist asking what the worst-case read is.

In adversarial mode, their job isn’t to improve the piece. It’s to find what fails.

The sequencing matters as much as the personas. The adversarial pass runs before the polish pass. Always. If you polish an argument before testing whether it survives scrutiny, you’ve invested effort in something that may need to be rebuilt from scratch. Running that stress-test on an outline, before any prose exists, is where structural problems get caught early.
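One way to picture the sequencing rule as code is a minimal Python sketch, not the actual Skill. Every name here (ReviewSession, Finding, toy_critic) is hypothetical, and the critic is a plain stand-in function where the real system would use an AI persona. The only behavior taken from the text is the ordering: the polish step refuses to run until the adversarial pass has run and come back clean.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Finding:
    critic: str  # which persona raised the issue
    issue: str   # what fails, in the critic's words


# Hypothetical stand-in: a critic is any function that reads a draft and
# returns the problems it finds. In practice this would be an AI persona.
Critic = Callable[[str], List[Finding]]


@dataclass
class ReviewSession:
    critics: Dict[str, Critic]
    adversarial_done: bool = False
    open_findings: List[Finding] = field(default_factory=list)

    def adversarial_pass(self, draft: str) -> List[Finding]:
        """Run every critic in the room and record what they find."""
        self.open_findings = [
            finding
            for critic in self.critics.values()
            for finding in critic(draft)
        ]
        self.adversarial_done = True
        return self.open_findings

    @property
    def ready_to_polish(self) -> bool:
        """True only after a stress-test that found nothing left to fix."""
        return self.adversarial_done and not self.open_findings

    def polish(self, draft: str, polisher: Callable[[str], str]) -> str:
        """Refuse to polish until the adversarial pass has come back clean."""
        if not self.ready_to_polish:
            raise RuntimeError("Run a clean adversarial pass before polishing.")
        return polisher(draft)


def toy_critic(draft: str) -> List[Finding]:
    """Toy rule for illustration only: flag one known worst-case read."""
    if "sour grapes" in draft.lower():
        return [Finding("communications strategist", "reads as sour grapes")]
    return []


session = ReviewSession(critics={"communications strategist": toy_critic})
first = session.adversarial_pass("An outline that reads like sour grapes.")
second = session.adversarial_pass("An outline with a reframed opening.")
polished = session.polish("An outline with a reframed opening.", str.strip)
```

The design choice worth noting is that the ordering is enforced by the session rather than remembered by the writer: calling polish on a draft with open findings raises an error instead of quietly proceeding.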

This piece went through that process. The adversarial panel caught a critical framing problem: I was leading with voice and copy-editing details rather than the substantive claim about argument construction. The piece you’re reading is different — and more defensible — because of that catch.

The clearest example from my published work: Nobody Else Knows What They’re Doing Either, a piece I wrote during the job search about navigating professional uncertainty. The adversarial pass caught the problem immediately: the piece could read as a laid-off executive rationalizing his situation. “Sour grapes” was the phrase. I hadn’t seen it. The version I published had a different opening, a different proof structure, a different framing of its central claim. More defensible. Truer to what I actually wanted to say.

Professional writers have always had rooms like this. Showrunners have writers’ rooms. Public figures have communications teams. Executives have advisors who will tell them what they don’t want to hear. AI makes something equivalent available to one person working alone. My Cast of Critics isn’t fixed — it has standing members who show up for most pieces and others who get brought in or excused depending on the topic or the artifact. I’ve run the Cast of Critics on articles, LinkedIn posts, project briefs, and even other Claude Skills. Each session starts by naming who’s in the room. Each session ends when the panel stops finding things that matter.

The Voice Fingerprint

The second thing I built was an Editorial Voice skill — a style guide built from my own published work.

The hardest thing to demonstrate about this skill is exactly what makes it valuable. It doesn’t produce something I can easily measure. What it catches is the gap between “sounds like competent writing” and “sounds like me” — real, but hard to show as a before/after. It catches the places where a draft sounds fluent but generic, where the sentences hold together but my voice has left the room.

What it captures isn’t my writing itself. It’s the patterns in my writing: how I structure an argument, where I put personal moments, how I connect a broad claim to a specific detail. Building it required me to name things I’d been doing without thinking — which turned out to be useful on its own.

You can’t build a system around something you haven’t articulated.

That process changed how I write, not just how I edit.

The evidence is the response from readers. Colleagues sharing articles with people they think should read them. Hiring managers referencing specific pieces in conversations. People who’ve read earlier pieces recognizing these articles as mine. That’s what the skill protects — not elegance, but credibility. The difference between content that could have come from anyone and writing that could only have come from me.

The Publish Gate

The third thing I built was a Publish Gate — a pre-publication check against a rubric I wrote for myself. Ten criteria. Three non-negotiable gates: authenticity, earned wisdom, and what I call the AI dismissal test.

The AI dismissal test is the one that matters most for my situation. The question isn’t whether AI was involved in producing this piece. The question is: what in this piece proves my irreplaceable contribution? Not “my experience is evident throughout” — that’s not an answer. The answer has to be specific: this paragraph where I describe something I actually built, this data point from something I actually measured, this admission that cost something to put on the page.

The gate is not a rubber stamp. It can return HOLD. The first time I used it on What You Do When Things Go Wrong — And When They Go Right it came back “Ready with Caveat.” The caveat was precise: the piece had two honest personal moments, but they weren’t equal. One side of the framework had a specific owned story. The other was acknowledged but not inhabited. That pushed me to find the missing moment — a manager quote that stuck with me: “It might not be your fault, but it is your problem.” That line, and the story around it, didn’t exist in any prior draft. The gate found this gap and pushed me to go deeper. The piece that published was more complete because of it.
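The gate logic described above can be sketched roughly in Python. This is an illustration under stated assumptions, not the actual Skill. The three gate names and the verdicts HOLD and “Ready with Caveat” come from the text. The plain “Ready” verdict, the function name publish_gate, and the rule that any failed gate forces HOLD are assumptions. The other seven criteria are not named in this piece, so the sketch treats them only as a possible source of caveats.

```python
from enum import Enum
from typing import Dict, List


class Verdict(Enum):
    HOLD = "HOLD"
    READY_WITH_CAVEAT = "Ready with Caveat"
    READY = "Ready"  # assumed: the text names only the two verdicts above


# The three non-negotiable gates named in the text.
GATES = ("authenticity", "earned wisdom", "AI dismissal test")


def publish_gate(gate_results: Dict[str, bool], caveats: List[str]) -> Verdict:
    """Assumed rule: any failed gate holds the piece; caveats qualify a pass."""
    missing = [gate for gate in GATES if gate not in gate_results]
    if missing:
        raise ValueError(f"No result recorded for: {', '.join(missing)}")
    if not all(gate_results[gate] for gate in GATES):
        return Verdict.HOLD
    if caveats:
        return Verdict.READY_WITH_CAVEAT
    return Verdict.READY


all_pass = {"authenticity": True, "earned wisdom": True, "AI dismissal test": True}
verdict = publish_gate(all_pass, caveats=["The two personal moments are not equal."])
```

Here verdict is Verdict.READY_WITH_CAVEAT, which mirrors the outcome described above: every gate passed, but the piece was not yet complete.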

I’m two months in with Substack. Thirty-seven subscribers. It’s early, and I know it. I wrote about why I’m doing this in You’re In the Creator Economy Now: in a job market where the standard playbook — polish the résumé, apply to jobs, wait for callbacks — no longer works the way it once did, building a public body of work is one of the few things that still generates real signal. The subscriber count isn’t the measure. The conversations are.

The referrals. The hiring managers who’ve read a piece and reached out. The colleagues who’ve shared something I wrote with their teams and their bosses. The job interviews where I’ve followed up with a link to something that goes deeper on a topic we’d discussed. That’s what moves the needle, even when it’s hard to track.

The system didn’t make me faster. It made the work more rigorous. Every piece goes through roughly ten rounds of substantive revision — argument challenges, stress-tests, voice calibration, a final quality check. The result is stronger than anything I’d have written alone, and it’s the first time I’ve sustained a writing practice longer than a few weeks.

There’s a version of AI-assisted writing that produces a lot of content with minimal human involvement. It’s out there. Fluent, plausible, and indistinguishable from everything else. What I’m trying to build is the opposite — a system where AI amplifies the challenge and critique, and I make every editorial call. The goal isn’t to remove myself from the process. It’s to produce a stronger body of work — and I think that distinction is worth drawing clearly, whatever tools you’re using.

The gravitational pull toward the mean is real. The tools that make content easier to produce are the same tools that make it easier to produce work that isn’t actually yours. The question isn’t whether to use AI. It’s whether the output is worth putting your name on.